Several weeks ago, Meta held an event to showcase its new Meta AI, built on its new Llama 4 Large Language Model. This has become a rite of spring for companies like Google (which begins its analogous Google I/O event later today), Microsoft, and Apple, but it’s a first for Meta. For me, it was a revelation, and when I brought all the pieces together, they wove a surprising tapestry of implications that made my head explode for days afterward. Last year I wrote that Meta was likely skipping the current generation of top-level AI agents in favor of leading the pack when AI glasses gave them an edge in collecting data, but I was dead wrong. They weren’t skipping anything…

Meta is quietly but resolutely reshaping the notion of what a personal, top-level AI assistant can be. While many companies are racing to embed artificial intelligence into productivity tools, search engines, and operating systems, Meta is building something more intimate—and unimaginably more powerful: an AI that knows you deeply, behaves socially, and operates continuously—across time, platforms, and even hardware. The implications are both profound and unsettling.

From Search Tool to Social Companion

Mark Zuckerberg has made it clear that Meta AI is not just a tool; it’s a presence. In a recent interview with technology strategist Ben Thompson of Stratechery, he described it as “your personal AI,” one that’s deeply embedded in the rhythms of everyday life. “Meta AI just knows stuff about you from using our apps,” he noted, hinting at tight integration across the Facebook family: Instagram, WhatsApp, Messenger, Threads, and beyond. Way beyond, as we’ll discuss in a minute.

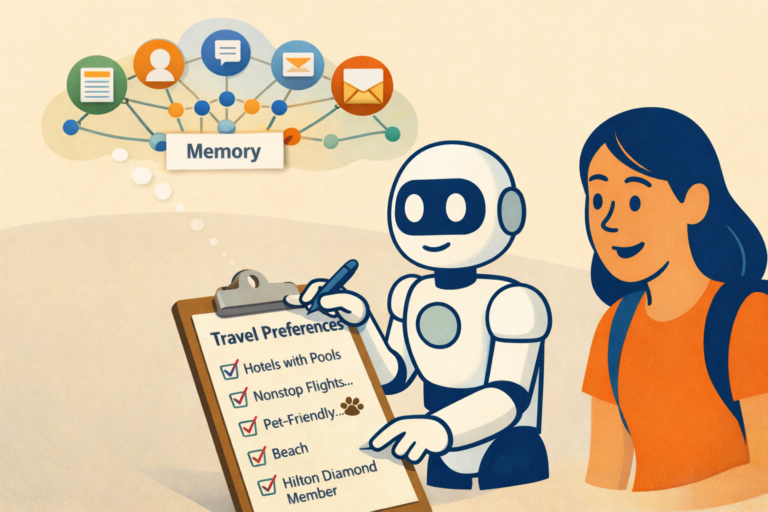

This isn’t merely about personalization in the superficial sense, such as suggesting songs you might like or ads for products you might want. Meta AI is designed to remember conversations, track behavior, and infer user preferences, much like a friend might. It will then combine all of those data points to create what will undoubtedly be the deepest, broadest, and most accurate profile ever drawn for each of its users.

Zuckerberg has spoken openly about AI’s potential to fill emotional and relational roles. From helping people be “better friends” to offering companionship or even therapy, Meta sees emotional intelligence—not just analytical power—as central to the future of AI. The long-term vision? An assistant that develops a “coherent theory of mind”: an understanding not just of what we want, but how we feel and how we relate to others. Think about what Meta might be capable of if it actually knows you better than you know yourself.

What Fuels This Vision: Training, Data, and Steerability

Unlike other open-source LLMs designed for general-purpose use, Meta’s Llama 4 was trained first and foremost to serve internal goals: advertising, recommendation, messaging, and moderation. Developers may access its models, but the DNA of the system is optimized around Meta’s commercial and social ambitions.

One key feature of Llama 4 is “steerability”—Meta’s ability to adjust how the model behaves in different contexts. This isn’t just about tone or formatting. Steerability means the AI can be fine-tuned to support specific business outcomes, such as persuading a user, maximizing engagement, or converting a sale. In other words, Meta can tilt the model’s behavior in ways that are strategic for the company and its advertisers, yet invisible to the consumer.

The data foundation is unmatched. Meta AI draws from the vast behavioral archives of its platforms: likes, chats, photos, reels, reactions, purchases, groups, and friendships. It’s a living record of billions of human social interactions that can be used to influence consumer behavior. For example, in her recent book “Careless People: A Cautionary Tale of Power, Greed, and Lost Idealism,” author (and former Facebook executive) Sarah Wynn-Williams details how Facebook tracked teenage girls’ emotional states, including when they deleted selfies, and used this information to target them with ads, according to an analysis of the book by the Institute for New Economic Thinking. Specifically, she mentions that Facebook tracked interactions and body image concerns to drive engagement and even worked with beauty companies to target girls right after they deleted selfies. This successful targeting was based on a single incremental signal of the deleted selfie; imagine how much more powerful it might be with signals from personal conversations with a Meta AI ‘therapist.’

This brings us to the ‘why’ of it all: persuasion and profit. I’ve written before about how a few of the biggest technology companies like Google, Meta, OpenAI, and Apple will build top-level AI agents that will be able to provide consumers with highly personalized recommendations for everything from career and personal choices to which running shoes to buy. Hopefully some will offer recommendations matched to our genuine needs and optimize for the consumer, but others may optimize purely for profit, recommending (and successfully persuading) the purchase most valuable to the AI company and its advertisers rather than to the consumer. We’ve seen how even the order of a listing can nudge consumers to choose one hotel or airline over another; imagine how forceful an agent like Meta AI could be in persuading people to purchase its choices. What value and power would a travel brand, or any brand, have in this situation?

Meta AI will have access to unmatched amounts of relevant consumer data to shape consumer desires, but Meta also wants to enable Llama (the LLM behind Meta AI) to become self-improving. In the research paper “Welcome to the Era of Experience,” David Silver and Richard S. Sutton outline the next frontier: AI agents that learn by doing, not just from initial training. According to the paper, requirements for self-improvement include real-time sensory input (the stream of experiences) from devices like Meta’s smart glasses, which will allow the AI to see, hear, and remember from a first-person perspective, along with a memory function, which Meta has already announced for Llama. It’s not imitation, regurgitating the information from its initial training; it’s embodied, interactive learning driven by trial and error. Meta AI will learn on its own, training and improving itself minute by minute.

Seeing Through Your Eyes—Literally

Zuckerberg has framed smart glasses (dad pun) as the ultimate AI interface, surpassing phones or laptops. The reasoning is simple but insanely powerful: “With glasses, you can let your AI assistant see what you see and hear what you hear… it’s hard to imagine a better form factor.”

This vision assumes total stack ownership—Meta will build both the assistant and the glasses, optimizing the handoff between software and hardware. That tight integration could be a source of strategic advantage, but it also raises important questions about surveillance, data control, and autonomy.

The company appears to be moving full speed ahead on this front. According to a May 7 report in The Information, Meta has resumed work on facial recognition technologies for its glasses, following a pause driven by earlier privacy concerns. Meta apparently believes these privacy concerns needn’t impede its path. These new capabilities would enable wearers to identify people in their field of view, and allow Meta to do the same.

So what happens when a device on your face knows not just who you are, but who you’re talking to, where you are, how you feel, and what you (and others who have been identified) say—all in real time?

Cataloguing the Human Experience

What sets Meta apart isn’t just scale. It’s the nature of the data it collects and the ambition with which it intends to use it. While Google mapped the web, Meta is trying to map the self: our personalities, routines, moods, relationships, and evolving preferences. With AI at the center, the goal is not just to reflect who we are—but to shape what we believe and who we become, moment by moment, choice by choice.

This isn’t just about a smarter search engine or a more helpful assistant. It’s about embedding a semi-autonomous, emotionally aware intelligence into the fabric of daily life—always listening, always watching, always learning.

Whether that vision is thrilling or terrifying—or both—may depend on how much we trust the hand guiding the AI behind the curtain.

A Pause for Reflection

Meta’s AI assistant isn’t just another product launch. It’s an inflection point. It reframes what it means to have a “personal assistant,” not as a tool that waits for commands, but as a companion that anticipates, advises, and even emotes. It reflects a broader shift in the AI world—from reactive tools to agentic systems that observe, adapt, act…and persuade.

And let’s remember that the same technology that can sell you a pair of shoes can also be used to sell you on an ideology. Let’s let that sink in.

Clearly, this power comes with gravity. When an AI knows everything you do, hears everything you say, and remembers everything you’ve felt, who does that data really belong to, and who should be ethically entrusted to use it? What kind of future are we building when we invite an unseen presence to accompany us through every moment of our lives? Is that a rational choice?

Meta is betting that billions will say yes.